From Monolith to Multi-Region Microservices: Automating Reliability at Scale

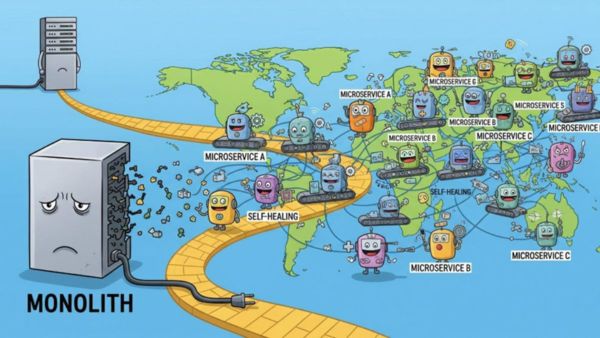

In an age of global scale, where customers expect services to be instantly and always available, architecture has become a core business concern. We faced this challenge in a major re-architecture initiative: moving from a monolithic application to a distributed, multi-region microservices system. But breaking up the monolith was only one side of it; we also wanted the architecture to build in reliability, observability, and self-healing from day one.

This article walks through that multi-phase journey, concentrating on the design patterns, operational resilience, and automation frameworks that enabled stability at scale.

1. Rethinking “Microservices” Beyond the Buzzword

Splitting the monolith was the easy part. The hard part was redefining how a service is scoped and operated. Instead of focusing on purely technical decomposition (e.g., separating controllers from data logic), we aligned services with business capabilities such as Billing, Risk Engine, and Policy Management, each with its own database, deployment pipeline, and operational SLOs.

This shift gave teams real autonomy—they could build, test, and deploy independently. Sure, it came with trade-offs: service interfaces became contracts, so breaking changes needed coordination. But it was worth it. Incidents stayed isolated, debugging got easier, and we could scale services based on business needs—not monolithic limitations.
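To make "interfaces became contracts" concrete, here is a minimal sketch of the kind of check a deployment pipeline could run before a service ships a new request schema. It is an illustration only: the `Schema` shape, the `breaking_changes` function, and the field names are hypothetical and not taken from our codebase.

```python
from typing import Dict, List, Tuple

# Hypothetical schema shape: field name -> (type name, is_required)
Schema = Dict[str, Tuple[str, bool]]


def breaking_changes(old: Schema, new: Schema) -> List[str]:
    """List changes in `new` that would break callers written against `old`."""
    problems: List[str] = []
    for field, (old_type, old_required) in old.items():
        if field not in new:
            problems.append(f"removed field: {field}")
            continue
        new_type, new_required = new[field]
        if new_type != old_type:
            problems.append(f"type changed: {field} ({old_type} -> {new_type})")
        elif new_required and not old_required:
            problems.append(f"field became required: {field}")
    for field, (_, required) in new.items():
        # New optional fields are safe; new required fields break old callers.
        if field not in old and required:
            problems.append(f"new required field: {field}")
    return problems


if __name__ == "__main__":
    v1 = {"policy_id": ("str", True), "amount": ("int", True)}
    v2 = {"policy_id": ("str", True), "amount": ("float", True),
          "currency": ("str", True), "note": ("str", False)}
    for problem in breaking_changes(v1, v2):
        print(problem)
```

An empty result means the change can ship independently; anything else is a signal that the owning team needs to coordinate with its consumers first.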

2. Multi-Region Isn’t Just About Duplication—It’s About Design

In our multi-region deployment, we tackled synchronization, latency balancing, and chaos resilience. Stateless services ran active-active behind Route 53 latency-based routing, whereas stateful concerns such as session tracking and payments ran as Cadence workflows with built-in retry and rollback.
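The retry-and-rollback behavior can be sketched in plain Python. This is not the Cadence API; it only illustrates the pattern of retrying each step and, when a step exhausts its attempts, compensating the completed steps in reverse order. The `run_with_rollback` function and the step names are hypothetical, and a real workflow would add backoff and durable state.

```python
from typing import Callable, List, Tuple

# Hypothetical step shape: (name, action, compensation)
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_with_rollback(steps: List[Step], max_attempts: int = 3) -> Tuple[bool, List[str]]:
    """Run steps in order, retrying each; on final failure undo completed steps."""
    completed: List[Tuple[str, Callable[[], None]]] = []
    log: List[str] = []
    for name, action, compensate in steps:
        for attempt in range(1, max_attempts + 1):
            try:
                action()
            except Exception:
                if attempt < max_attempts:
                    continue  # retry the same step
                log.append(f"{name}:failed")
                # Undo everything that succeeded, most recent first.
                for done_name, undo in reversed(completed):
                    undo()
                    log.append(f"{done_name}:rolled_back")
                return False, log
            log.append(f"{name}:ok")
            completed.append((name, compensate))
            break
    return True, log


if __name__ == "__main__":
    def reserve_funds() -> None:
        pass

    def release_funds() -> None:
        pass

    def charge_card() -> None:
        raise RuntimeError("payment gateway unavailable")

    def refund_card() -> None:
        pass

    ok, log = run_with_rollback([
        ("reserve_funds", reserve_funds, release_funds),
        ("charge_card", charge_card, refund_card),
    ])
    print(ok, log)
```

The design choice worth noting is that compensation is declared next to each step, so the rollback path is defined at the same time as the happy path rather than bolted on later.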

In other words, rather than blindly duplicating everything, we made each service aware of the region it is running in and added fallback logic and caching wherever necessary. The payoff: during an AWS outage, failover completed in less than 60 seconds with zero customer impact, and not a single support ticket was filed.
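As a rough sketch of what region awareness with fallback and caching can look like, the client below tries its own region first, then the fallback regions, and finally serves a recent cached value if every region is unreachable. The `RegionAwareClient` class, the `fetch` callable, the region names, and the 30-second TTL are all assumptions made for illustration.

```python
import time
from typing import Callable, Dict, List, Tuple


class AllRegionsFailed(Exception):
    """No region answered and no fresh-enough cached value exists."""


class RegionAwareClient:
    def __init__(
        self,
        local_region: str,
        fallback_regions: List[str],
        fetch: Callable[[str, str], str],  # fetch(region, key) -> value
        cache_ttl_s: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        # Local region always goes first; fallbacks keep their given order.
        self.order = [local_region] + [r for r in fallback_regions if r != local_region]
        self.fetch = fetch
        self.cache_ttl_s = cache_ttl_s
        self.clock = clock
        self._cache: Dict[str, Tuple[float, str]] = {}

    def get(self, key: str) -> Tuple[str, str]:
        """Return (value, source), where source is a region name or "cache"."""
        for region in self.order:
            try:
                value = self.fetch(region, key)
            except ConnectionError:
                continue  # region unreachable, try the next one
            self._cache[key] = (self.clock(), value)
            return value, region
        cached = self._cache.get(key)
        if cached is not None and self.clock() - cached[0] <= self.cache_ttl_s:
            return cached[1], "cache"
        raise AllRegionsFailed(key)


if __name__ == "__main__":
    def fake_fetch(region: str, key: str) -> str:
        if region == "us-east-1":
            raise ConnectionError(region)  # simulate a regional outage
        return f"{key}@{region}"

    client = RegionAwareClient("us-east-1", ["eu-west-1"], fake_fetch)
    print(client.get("session:42"))
```

Returning the source alongside the value makes it easy to emit a metric whenever a request is served from a fallback region or from cache.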

3. Reliability by Automation: Building Self-Healing Systems

Reliability wasn’t an afterthought—it was baked into the design from day one. We built an “autopilot” system using Cadence workflows and Prometheus SLIs to trigger automatic fixes when things went off-track—like restarting pods, rerouting traffic, or scaling across regions.

The best part? Recovery logic was declarative, so teams could define it as code. This cut MTTR by 75% and gave on-call engineers real peace of mind—no more waking up for issues the system could fix itself.
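A minimal sketch of declarative recovery logic might look like the following. The rule format, SLI names, thresholds, and action names are hypothetical; in a setup like ours the SLI values would come from Prometheus and each action name would be handed to a workflow, whereas here both are plain Python values.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class RecoveryRule:
    sli: str           # name of the indicator to watch
    threshold: float   # boundary value
    breach_when: str   # "above" or "below"
    action: str        # name of the remediation to trigger

    def __post_init__(self) -> None:
        if self.breach_when not in ("above", "below"):
            raise ValueError(f"breach_when must be 'above' or 'below', got {self.breach_when!r}")


# Recovery logic expressed as data that a team can review and version.
RULES = [
    RecoveryRule("error_rate", 0.05, "above", "restart_pods"),
    RecoveryRule("p99_latency_ms", 800.0, "above", "reroute_traffic"),
    RecoveryRule("healthy_replicas", 3.0, "below", "scale_out_to_secondary_region"),
]


def plan_remediation(rules: List[RecoveryRule], sli_values: Dict[str, float]) -> List[str]:
    """Return the actions whose rules are breached, in rule order, without duplicates."""
    actions: List[str] = []
    for rule in rules:
        value = sli_values.get(rule.sli)
        if value is None:
            continue  # no data for this SLI: take no action rather than guess
        if rule.breach_when == "above":
            breached = value > rule.threshold
        else:
            breached = value < rule.threshold
        if breached and rule.action not in actions:
            actions.append(rule.action)
    return actions


if __name__ == "__main__":
    snapshot = {"error_rate": 0.09, "p99_latency_ms": 120.0, "healthy_replicas": 2.0}
    print(plan_remediation(RULES, snapshot))
```

Keeping the rules as data separates what should trigger a fix from how the fix is executed, which is what lets teams own their recovery logic as code.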

4. Tooling as an Enabler, Not a Silver Bullet

Tools like Docker, Terraform, Kafka, and OpenTelemetry have almost become household names, but one lesson stood out: the real value comes from adapting the tools to your own domain.

We injected region awareness into our Terraform modules so that deployments stayed consistent yet could still be customized per region. Our Docker images were tagged not only by version but also by the combination of feature flags and region-specific configuration, which let us roll back to a specific combination rather than just a version. OpenTelemetry gave us basic tracing out of the box; custom spans then let us visualize business events instead of just HTTP calls.
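The tagging idea can be sketched as follows. The tag layout (`version__region__flags`), the `Release` class, and the `rollback_target` helper are assumptions for illustration and not our actual scheme; the only external fact relied on is that a Docker tag may contain letters, digits, underscores, periods, and hyphens, may not start with a period or hyphen, and is limited to 128 characters.

```python
import re
from dataclasses import dataclass
from typing import FrozenSet, List, Optional

# Docker tag rules: [A-Za-z0-9_.-], no leading '.' or '-', max 128 characters.
_TAG_RE = re.compile(r"^[A-Za-z0-9_][A-Za-z0-9_.-]{0,127}$")


@dataclass(frozen=True)
class Release:
    version: str
    region: str
    flags: FrozenSet[str]

    def tag(self) -> str:
        """Build a deterministic tag from version, region, and sorted flags."""
        flag_part = ".".join(sorted(self.flags)) or "none"
        tag = f"{self.version}__{self.region}__{flag_part}"
        if not _TAG_RE.match(tag):
            raise ValueError(f"not a valid Docker tag: {tag!r}")
        return tag


def rollback_target(history: List[Release], current: Release) -> Optional[Release]:
    """Most recent earlier release with the same region and flags but another version."""
    for release in reversed(history):
        if (
            release.region == current.region
            and release.flags == current.flags
            and release.version != current.version
        ):
            return release
    return None


if __name__ == "__main__":
    history = [
        Release("2.3.0", "us-east-1", frozenset({"new-pricing"})),
        Release("2.3.0", "eu-west-1", frozenset()),
        Release("2.4.0", "us-east-1", frozenset({"new-pricing"})),
    ]
    target = rollback_target(history, history[-1])
    print(target.tag() if target else "no rollback target")
```

Sorting the flags keeps the tag stable regardless of the order in which flags were enabled, so the same combination always maps to the same image tag.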

One of the more impactful changes? Replacing dumb HTTP health checks with semantic probes that monitor things like encryption key rotation, queue health, and DB sync status. Those checks then fed live dashboards and auto-remediation triggers.
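Here is a small sketch of what semantic probes could look like compared with a bare "HTTP 200" check. The probe names, thresholds (90-day key age, 10,000 queued messages, 5 seconds of replication lag), and the `summarize` output shape are illustrative assumptions; real probes would read these inputs from the key store, the message broker, and the database.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, List


@dataclass(frozen=True)
class ProbeResult:
    name: str
    healthy: bool
    detail: str


def key_rotation_probe(last_rotated: datetime, now: datetime,
                       max_age: timedelta = timedelta(days=90)) -> ProbeResult:
    """Healthy only if the encryption key was rotated recently enough."""
    age = now - last_rotated
    return ProbeResult("encryption_key_rotation", age <= max_age,
                       f"key age {age.days}d (max {max_age.days}d)")


def queue_probe(depth: int, oldest_message_age_s: float,
                max_depth: int = 10_000, max_age_s: float = 300.0) -> ProbeResult:
    """Healthy only if the queue is neither too deep nor stalled."""
    healthy = depth <= max_depth and oldest_message_age_s <= max_age_s
    return ProbeResult("queue_health", healthy,
                       f"depth={depth}, oldest={oldest_message_age_s:.0f}s")


def db_sync_probe(replication_lag_s: float, max_lag_s: float = 5.0) -> ProbeResult:
    """Healthy only if cross-region replication is keeping up."""
    return ProbeResult("db_sync", replication_lag_s <= max_lag_s,
                       f"replication lag {replication_lag_s:.1f}s")


def summarize(results: List[ProbeResult]) -> Dict[str, object]:
    """Collapse probe results into a payload a dashboard or trigger can consume."""
    failing = [r.name for r in results if not r.healthy]
    return {"status": "ok" if not failing else "degraded", "failing": failing}


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    results = [
        key_rotation_probe(now - timedelta(days=120), now),
        queue_probe(depth=50, oldest_message_age_s=12.0),
        db_sync_probe(replication_lag_s=1.2),
    ]
    print(summarize(results))
```

Because each probe names what is failing, the same result can drive both a dashboard tile and a targeted remediation, which a generic liveness check cannot do.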

Final Thoughts

Architecture at scale is about trade-offs, but with the right mindset it is also about automation, ownership, and confidence. Our journey from a single-region monolith to an automated, globally resilient platform was never easy, but the steps had to be taken, and they have been transformative.

If your team is considering this path, here is a reminder: reliability must be designed in, automation should be a deliberate outcome, and tools must be adapted to your domain.
