Here’s How Facebook Will Stop The Future Of Crime – Deepfakes

Facebook lands in hot water over privacy and security fiascos more often than not. However, its latest move might be a step in the right direction, as it aims to curb what could potentially be the most dangerous crime of the future: deepfakes.

The social media goliath has created a model that can tell whether a video is a deepfake. Not just that, it can also determine the algorithm used to make that video. While the idea sounds interesting, will it be able to keep deepfakes at bay? Before we delve into the details, let's first understand what deepfakes are.

What Is A Deepfake Video?

Have you ever come across a video where a highly influential person says something out of the ordinary? If yes, then you might already have seen a deepfake video. For instance, there's a video of Barack Obama calling Donald Trump a "complete dipshit", and another of Mark Zuckerberg talking about controlling the future with the data of billions of users. Those are all deepfakes.

The term "deepfake" refers to a video in which AI and deep learning, a method for training computer algorithms, are used to make a person appear to say something they never said. Although deepfakes are still relatively new, experts believe that they have the potential to become the most dangerous crime of the coming years.

Many of these deepfake videos are pornographic. Deeptrace, an AI firm, found 15,000 deepfake videos on the web as of September 2019, 96% of which were pornographic. In 99% of those, female celebrities' faces had been mapped onto porn stars.

How Will Facebook Detect Deepfake?

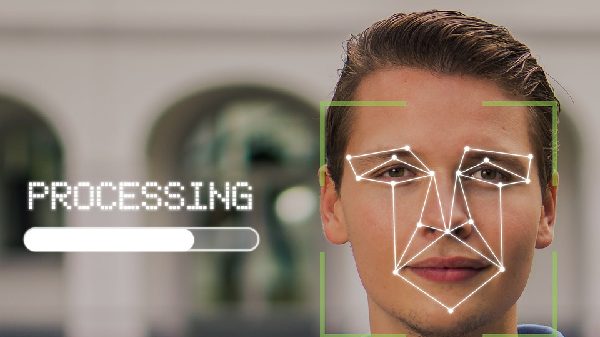

To detect a deepfake video, it's imperative to tell whether an image is real or not. However, there's a limited amount of information available for researchers to do so.

Facebook's latest method detects the unique patterns left behind by the AI model that created a deepfake. The suspected image is run through a network that looks for imperfections introduced when the deepfake was created. These imperfections could be anything from noisy pixels to disturbed symmetry, and they can be used to infer the model's 'hyperparameters'.

"To understand hyperparameters better, think of a generative model as a type of car and its hyperparameters as its various specific engine components. Different cars can look similar, but under the hood they can have very different engines with vastly different components", Facebook said in a blog post.

"Our reverse engineering technique is somewhat like recognizing the components of a car based on how it sounds, even if this is a new car we've never heard of before."
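To make the idea of "imperfections" such as noisy pixels and disturbed symmetry more concrete, here is a minimal sketch in Python. It is not Facebook's method: the function names, the 3x3 blur, and the mirror-based score are all assumptions chosen purely for illustration. The sketch treats whatever remains after smoothing an image as a crude "fingerprint" and then measures how lopsided that fingerprint is.

```python
def box_blur(image):
    """Smooth a grayscale image (a list of rows of numbers) with a 3x3 mean filter.

    Pixels beyond the border are replaced by the nearest edge pixel.
    """
    h, w = len(image), len(image[0])

    def px(y, x):
        # Clamp coordinates so that border pixels reuse their nearest neighbour.
        return image[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)]

    return [
        [sum(px(y + dy, x + dx) for dy in (-1, 0, 1) for dx in (-1, 0, 1)) / 9.0
         for x in range(w)]
        for y in range(h)
    ]


def residual_fingerprint(image):
    """Hypothetical 'fingerprint': the image minus its smoothed copy (pixel noise)."""
    blurred = box_blur(image)
    return [
        [pixel - smooth for pixel, smooth in zip(row, blurred_row)]
        for row, blurred_row in zip(image, blurred)
    ]


def asymmetry_score(fingerprint):
    """Mean absolute difference between the fingerprint and its left-right mirror."""
    diffs = [
        abs(value - mirrored)
        for row in fingerprint
        for value, mirrored in zip(row, reversed(row))
    ]
    return sum(diffs) / len(diffs)


# A perfectly flat image leaves no residual, so its score is zero.
flat = [[5.0] * 4 for _ in range(4)]

# The same image with one stray bright pixel near the left edge.
spiked = [row[:] for row in flat]
spiked[1][0] = 14.0

flat_score = asymmetry_score(residual_fingerprint(flat))
spiked_score = asymmetry_score(residual_fingerprint(spiked))
```

In this toy example the flat image scores zero while the image with the stray pixel scores above zero. A real detector would learn far subtler patterns from large sets of generated images, and would go on to estimate hyperparameters rather than stop at a single score.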

Why Is It Necessary?

Since deepfake software is customizable, it enables people with nefarious intentions to hide themselves. Facebook claims that it can detect whether a media file is a deepfake from just a single still image. The model also identifies which neural network was used to make the video, which can help trace the person or group behind the deepfake.

"Since generative models mostly differ from each other in their network architectures and training loss functions, mapping from the deepfake or generative image to the hyperparameter space allows us to gain critical understanding of the features of the model used to create it", Facebook explains.

The new technique doesn't necessarily mean that deepfakes will disappear, as they are still evolving. And knowing that technology to detect them exists, malicious individuals might have already started planning their way around it.

However, the new model will help engineers better identify current deepfake videos and make the system capable of detecting even more advanced deepfakes.
