Predicting Airline Delays at Scale with Spark ML

Every delayed flight creates massive network-level disruptions: crews go out of rotation, gates get blocked by planes unable to depart or arrive as scheduled, and passengers miss connecting flights, all pushing operating costs into the billions each year. Even so, airlines still struggle to predict accurately which flights will incur delays far enough in advance to take any action.

In this article, Abhinav Sharma, as part of a four-member data science team at UC Berkeley, California, walks us through how his team used Apache Spark ML to train large-scale classifiers on tens of millions of flight records, achieving an F1 of approximately 0.9 in predicting whether a flight will depart more than 15 minutes late, two hours before its scheduled off-block time.

Framing the prediction problem

“We framed the task as a binary classification problem. Given what is known two hours before scheduled departure, the objective is to accurately predict if a flight will depart over 15 minutes behind schedule or be canceled.” The authors' choice of the 15-minute threshold aligns with the aviation industry’s definition of “operationally meaningful delay” as found in prior research in this domain.

To evaluate the model’s performance, they chose F1 score as their primary metric. “Because the on-time flights heavily outnumber the delayed flights, it creates a class imbalance that can bias ML models, making accuracy a misleading metric.” In such scenarios, Abhinav explains, “the F1 score balances both the airline's objective to avoid missed delays (high recall) and the operating costs of false alarms (high precision).” Because about 80% of all flights leave on time, a naive 'always on-time' model would score roughly 80% accuracy while being operationally useless.
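The accuracy-versus-F1 argument can be checked with a few lines of arithmetic. The sketch below uses toy labels with an assumed 80/20 on-time/delayed split (not the team's data) and hypothetical helper names, and scores an "always on-time" predictor on both metrics.

```python
def precision_recall_f1(y_true, y_pred):
    """Return (precision, recall, F1) for the positive class (1 = delayed)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)


# Toy labels (assumed split): 80 on-time flights (0) and 20 delayed flights (1).
y_true = [0] * 80 + [1] * 20
always_on_time = [0] * 100  # naive model that never predicts a delay

naive_accuracy = accuracy(y_true, always_on_time)
naive_f1 = precision_recall_f1(y_true, always_on_time)[2]
print(f"accuracy={naive_accuracy:.2f}, F1={naive_f1:.2f}")  # accuracy=0.80, F1=0.00
```

The naive model never flags a delay, so its recall and F1 on the delayed class are zero even though its accuracy looks respectable.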

Building a multi‑source delay dataset

Describing the construction of the dataset, Abhinav comments, “We began our study by using the United States' Department of Transportation's on-time performance data for all domestic flights. Additionally, we joined this with the National Oceanic and Atmospheric Administration (NOAA) weather observation and weather station metadata.” The U.S. Department of Transportation data has flight schedules and actual departure times, along with detailed delay causes. Using open airport information (time zones, codes) the authors synced the weather and flight timelines to make better predictions.
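One way to picture the timeline alignment is as a time-zone conversion followed by an "as-of" lookup: convert the local scheduled departure to UTC, step back two hours to the prediction time, and take the latest weather reading at or before that moment. The sketch below is plain Python rather than the team's Spark join, and the function names, the fixed UTC offset (which ignores daylight saving time), and the sample readings are all assumptions for illustration.

```python
from bisect import bisect_right
from datetime import datetime, timedelta, timezone


def to_utc(local_naive, utc_offset_hours):
    """Attach a fixed UTC offset to a naive local timestamp and convert to UTC."""
    local_tz = timezone(timedelta(hours=utc_offset_hours))
    return local_naive.replace(tzinfo=local_tz).astimezone(timezone.utc)


def latest_observation(observations, cutoff_utc):
    """Return the newest (time_utc, reading) at or before cutoff_utc, or None.

    `observations` must already be sorted by time_utc.
    """
    times = [time_utc for time_utc, _ in observations]
    idx = bisect_right(times, cutoff_utc)
    return observations[idx - 1] if idx else None


# Hypothetical flight: scheduled 10:00 local at an airport assumed to be UTC-8.
sched_dep_utc = to_utc(datetime(2019, 1, 15, 10, 0), utc_offset_hours=-8)
prediction_time = sched_dep_utc - timedelta(hours=2)

# Hypothetical station readings, already in UTC and sorted by time.
utc = timezone.utc
weather = [
    (datetime(2019, 1, 15, 15, 0, tzinfo=utc), {"wind_kt": 8}),
    (datetime(2019, 1, 15, 15, 55, tzinfo=utc), {"wind_kt": 14}),
    (datetime(2019, 1, 15, 16, 30, tzinfo=utc), {"wind_kt": 25}),
]

matched = latest_observation(weather, prediction_time)
print(matched[0].isoformat(), matched[1])
```

Here the 16:30 UTC reading is ignored because it falls after the 16:00 UTC prediction time. Using only readings available at prediction time keeps the weather features consistent with the two-hour horizon.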

Next, the authors engineered additional high-signal features by calculating route-level historical averages of delay rates, along with rolling statistics of delays over the previous day(s) or week for a specific tail number, flight number, and so on. They also included basic indicators for whether the departure date falls on a weekend or a legal holiday, both of which are well-known drivers of demand and traffic. These features allow the model to capture not just 'what is currently scheduled' but 'how this route and aircraft tend to behave under similar conditions.'
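A rolling statistic of this kind can be sketched as follows. This is a simplified, single-machine illustration, not the team's Spark code: the function name, the record layout, and the window of three prior flights are assumptions. Each flight receives the delay rate of earlier flights that share its key, and never its own outcome.

```python
from collections import defaultdict, deque


def prior_delay_rates(flights, key, window=3):
    """For each flight, return the delay rate of up to `window` earlier flights
    sharing the same `key` (e.g. tail number or route), or None with no history.

    `flights` must be sorted by departure time; each item needs `key` and a
    0/1 "delayed" field. The current flight's own label is never included.
    """
    history = defaultdict(lambda: deque(maxlen=window))
    rates = []
    for flight in flights:
        past = history[flight[key]]
        rates.append(sum(past) / len(past) if past else None)
        past.append(flight["delayed"])  # only visible to later flights
    return rates


# Hypothetical records, already ordered by departure time.
flights = [
    {"tail": "N101", "delayed": 1},
    {"tail": "N202", "delayed": 0},
    {"tail": "N101", "delayed": 0},
    {"tail": "N101", "delayed": 1},
]
print(prior_delay_rates(flights, key="tail"))  # [None, None, 1.0, 0.5]
```

A production version would additionally need to drop any earlier flight whose outcome is not yet known two hours before the departure being scored.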

Why we needed Spark ML

At full scale, the final dataset included tens of millions of individual flight records over multiple years and hundreds of millions of weather observation records linked at the station and time level. Training even basic models on this volume of data would quickly saturate a single machine and make iteration extremely painful.

Abhinav used Databricks along with PySpark ML to distribute the feature creation and classifier training tasks among multiple nodes in a cluster. Additionally, he stored the intermediate results in Parquet format (for columnar storage) so that it would be possible to efficiently read them back into memory when needed.

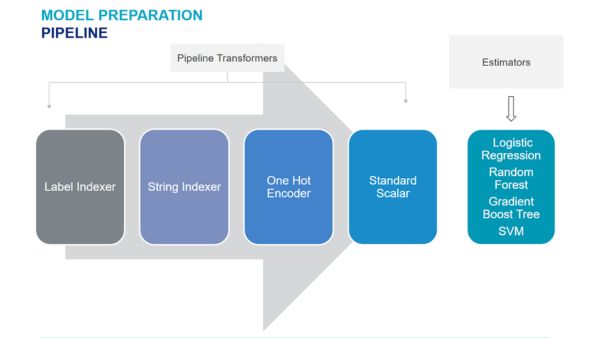

Spark ML pipelines encapsulated all the preprocessing steps (string indexing, which maps each categorical string value to a numeric index; one-hot encoding, which turns those indices into binary indicator vectors; scaling of numeric features; and assembling everything into a single dense or sparse feature vector), allowing the team to switch quickly between classifiers without rebuilding the data flow for each one.
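To make the first two stages concrete, here is a plain-Python sketch of what string indexing and one-hot encoding do conceptually. It is not Spark code and the helper names are hypothetical. The frequency-descending ordering is chosen to resemble the default behavior of Spark's `StringIndexer`, while Spark's `OneHotEncoder` emits sparse vectors and by default drops the last category, which this sketch does not do.

```python
from collections import Counter


def fit_string_index(values):
    """Map each category to an integer index, most frequent category first.

    Ties are broken alphabetically so the mapping is deterministic.
    """
    counts = Counter(values)
    ordered = sorted(counts, key=lambda v: (-counts[v], v))
    return {value: i for i, value in enumerate(ordered)}


def one_hot(index, size):
    """Return a dense indicator vector with a single 1.0 at `index`."""
    vec = [0.0] * size
    vec[index] = 1.0
    return vec


# Hypothetical carrier codes for six flights.
carriers = ["AA", "DL", "AA", "UA", "AA", "DL"]
carrier_index = fit_string_index(carriers)
encoded = [one_hot(carrier_index[c], len(carrier_index)) for c in carriers]

print(carrier_index)  # {'AA': 0, 'DL': 1, 'UA': 2}
print(encoded[1])     # [0.0, 1.0, 0.0]
```

The index alone would imply a false ordering between carriers, which is why the indicator vector, not the raw index, is what a linear model should consume.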

Handling time and class imbalance correctly

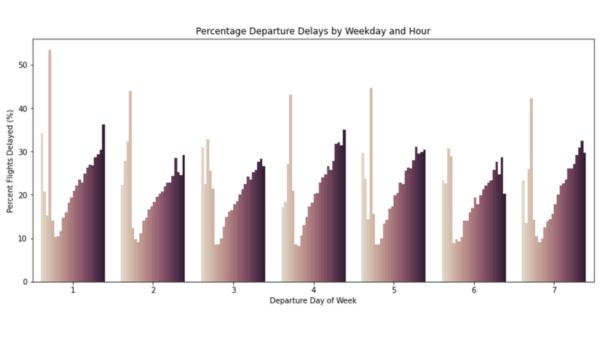

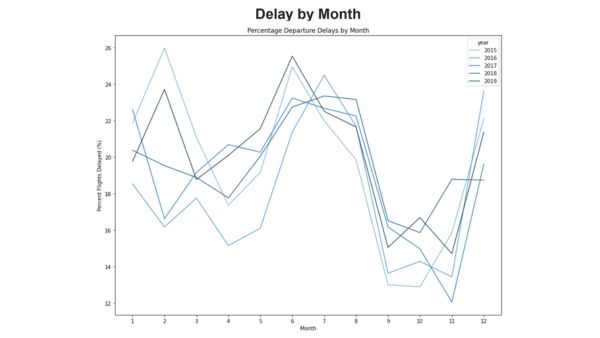

Flight delay data is highly seasonal and skewed both over time and across classes. Roughly 80% of all flights are on time, and delays follow strong time-of-day, day-of-week, and seasonal patterns. Because a random train/test split can leak information from the future into the training set, the team used time-aware splits: models are always trained on earlier months than the months they are tested on, which mirrors how an airline would use the model in production.
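A time-aware split can be sketched as a generator that only ever pairs a test period with the periods that precede it. The version below grows the training window with each fold. The function name, the month labels, and the minimum of two training periods are assumptions for illustration, not the team's exact configuration.

```python
def expanding_window_splits(periods, min_train=2):
    """Yield (train_periods, test_period) pairs in chronological order.

    Every training period is strictly earlier than the test period, so no
    future information can reach the model during training.
    """
    ordered = sorted(periods)  # ISO-style "YYYY-MM" labels sort chronologically
    for i in range(min_train, len(ordered)):
        yield ordered[:i], ordered[i]


months = ["2019-03", "2019-01", "2019-04", "2019-02"]
folds = list(expanding_window_splits(months))
for train, test in folds:
    print(f"train on {train} -> test on {test}")
```

With four months this yields two folds: January and February predicting March, then January through March predicting April.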

To handle class imbalance, the team under-sampled during training: the majority class (on-time flights) was randomly down-sampled, while the test sets were left unchanged. The same approach appears in previous research on flight delay prediction and in imbalanced learning more broadly. Alternatives exist, including oversampling or generating artificial positive examples, but synthetic examples of rare events can make performance look better than it really is. This simple strategy significantly improved recall on delayed flights without that risk.
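A minimal sketch of majority-class under-sampling follows. The function name, the 1:1 target ratio, and the fixed seed are assumptions; the article does not state the ratio the team used. The key point it illustrates is that only training rows are resampled, so evaluation still reflects the real class mix.

```python
import random


def undersample_majority(rows, label_key="delayed", ratio=1.0, seed=42):
    """Keep every delayed row (label 1) and a random subset of on-time rows.

    `ratio` is the number of on-time rows kept per delayed row.
    Apply this to training data only; leave the test set untouched.
    """
    rng = random.Random(seed)
    positives = [r for r in rows if r[label_key] == 1]
    negatives = [r for r in rows if r[label_key] == 0]
    keep = min(len(negatives), int(len(positives) * ratio))
    balanced = positives + rng.sample(negatives, keep)
    rng.shuffle(balanced)
    return balanced


# Toy training set with the roughly 80/20 imbalance described above.
train_rows = [{"id": i, "delayed": 0} for i in range(80)]
train_rows += [{"id": 80 + i, "delayed": 1} for i in range(20)]

balanced_train = undersample_majority(train_rows)
n_delayed = sum(r["delayed"] for r in balanced_train)
print(len(balanced_train), n_delayed)  # 40 20
```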

Comparing four scalable classifiers

Abhinav and the team implemented and compared four Spark‑native models that can all scale to tens of millions of rows:

- Logistic Regression

- Random Forest

- Gradient-Boosted Trees

- Linear Support Vector Machine (Linear SVC)

Logistic regression provided a good base model: it is quick to train, relatively simple to regularize, and its coefficients are easy to interpret as approximate weights for each feature's importance. Tree-based models (random forest (RF) and gradient-boosted trees (GBT)), on the other hand, can detect nonlinear relationships in the data and handle many of the engineered categorical and aggregated features with greater flexibility. Finally, the authors implemented a margin-maximizing classifier (linear SVM), which allowed a direct comparison against logistic regression on the same transformed feature space.

Speaking of the models’ performance and results, Abhinav recalls, “All four models achieved high F1 scores (in the 0.8-0.9 range) when tested across multiple time frames and feature hypotheses on the unbalanced test data. Interestingly, logistic regression ranked at the top, peaking at an F1 of around 0.91 for our best feature set. GBT and RF models performed similarly and were able to close most of the gap with the introduction of richer, domain‑specific features, with F1 reaching the high 0.8s. These more complex models, of course, come with trade-offs around compute requirements, training time and interpretability of results.”

What actually moved the needle

Several feature families were identified as being particularly strong in predicting delays, reiterating and building upon what past research studies had found:

- Historical route and carrier performance: The historical delay patterns of routes and airlines — represented through rolling averages and delay percentages — provided the models with a "prior" expectation of how “risky” a specific flight would be even before cross-referencing with current day factors.

- Short-horizon congestion signals: Features summarizing delays 2–4 hours before scheduled departure time at either the originating airport or for the inbound tail number helped identify potential congestion and rotation issues.

- Calendar/holiday effects: Simple encodings of day-of-week, month, and major travel holidays (e.g., New Year’s Day, Christmas) helped capture peak travel periods and, with them, increased demand, staffing complexity, and delay risk.
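The short-horizon congestion signal in the list above can be sketched as a windowed delay rate at the origin airport. The exact feature definition is not given in the article, so the following is an assumption: the function name and record layout are hypothetical, and the window counts only flights that actually departed between four and two hours before the target's scheduled departure, so every input is observable at prediction time.

```python
from datetime import datetime, timedelta


def origin_congestion(target, departed, start_h=4, end_h=2, late_min=15):
    """Share of same-origin flights that departed in [dep - 4h, dep - 2h]
    and left more than `late_min` minutes behind schedule.

    Returns None when no flight from the origin departed in the window.
    """
    window_start = target["sched_dep"] - timedelta(hours=start_h)
    window_end = target["sched_dep"] - timedelta(hours=end_h)
    recent = [
        f for f in departed
        if f["origin"] == target["origin"]
        and window_start <= f["actual_dep"] <= window_end
    ]
    if not recent:
        return None
    late = sum(
        1 for f in recent
        if f["actual_dep"] - f["sched_dep"] > timedelta(minutes=late_min)
    )
    return late / len(recent)


day = datetime(2019, 7, 4)
target = {"origin": "ORD", "sched_dep": day.replace(hour=12)}
departed = [
    # 30 minutes late, departed inside the 08:00-10:00 window
    {"origin": "ORD", "sched_dep": day.replace(hour=8, minute=30),
     "actual_dep": day.replace(hour=9)},
    # 5 minutes late, inside the window
    {"origin": "ORD", "sched_dep": day.replace(hour=9, minute=30),
     "actual_dep": day.replace(hour=9, minute=35)},
    # departed at 10:30, after the window closes, so not yet observable
    {"origin": "ORD", "sched_dep": day.replace(hour=9, minute=50),
     "actual_dep": day.replace(hour=10, minute=30)},
    # different origin airport
    {"origin": "LGA", "sched_dep": day.replace(hour=8),
     "actual_dep": day.replace(hour=9)},
]
congestion = origin_congestion(target, departed)
print(congestion)  # 0.5
```

The same function could be keyed on the inbound tail number instead of the origin airport to approximate the rotation signal.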

Incorporating weather features improved the boosted tree models more than it improved logistic regression. This observation is consistent with other research showing that non-linear methods are better at identifying interaction effects between weather factors and operational factors. “For production environments,” Abhinav explains, “a combination of a well-regularized linear baseline model with a more expressive tree-based model can provide improved robustness and incremental lift from the predictive model.”

Turning scores into airline decisions

Talking about the decision impacts of the model scores, Abhinav shares, “A classifier that has a good (high) F1-score will only help when the outputs of the model translate into actionable results. In our setup, each prediction corresponds to a specific flight that has a scheduled departure time of approximately two hours in the future. The model gives both a binary delay/no-delay label for the flight and a numerical probability score for that prediction.”

Abhinav further clarifies, “An airline can tune this model by setting its decision threshold to favor either recall (capture more true delays at the cost of false alarms) or precision (notify only when risk confidence is high, but miss some events). For example, an operations team may wish to be overly cautious during periods of congestion (e.g. hub airports during peak holiday travel), accepting greater numbers of false positive alerts. Conversely, they may choose a higher decision threshold during periods of light usage (e.g. less busy routes), where schedule buffers are greater and passengers are less impacted by individual delays.”
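The threshold trade-off described here can be illustrated with a handful of made-up probability scores. The scores, labels, function name, and the two thresholds below are assumptions chosen for illustration, not outputs of the team's models.

```python
def precision_recall_at(threshold, scores, labels):
    """Precision and recall for the delayed class when flagging score >= threshold."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for p, y in zip(preds, labels) if p == 1 and y == 1)
    fp = sum(1 for p, y in zip(preds, labels) if p == 1 and y == 0)
    fn = sum(1 for p, y in zip(preds, labels) if p == 0 and y == 1)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Hypothetical predicted delay probabilities and true outcomes (1 = delayed).
scores = [0.95, 0.80, 0.65, 0.40, 0.30, 0.10]
labels = [1, 1, 0, 1, 0, 0]

cautious = precision_recall_at(0.3, scores, labels)  # low threshold, favors recall
strict = precision_recall_at(0.7, scores, labels)    # high threshold, favors precision
print(f"threshold 0.3: precision={cautious[0]:.2f}, recall={cautious[1]:.2f}")
print(f"threshold 0.7: precision={strict[0]:.2f}, recall={strict[1]:.2f}")
```

In this toy case the low threshold catches every delay but raises two false alarms (precision 0.60, recall 1.00), while the high threshold raises none and misses one delay (precision 1.00, recall 0.67).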

Lessons for building large‑scale delay predictors

A number of key takeaways are evident from this project for organizations that wish to implement a similar system:

- Feature engineering beats blind complexity: Carefully designed temporal and operational features, grounded in real-world airline operations, delivered more model performance than simply moving to deep learning, consistent with other studies on flight delays.

- Simple models scale and generalize well: The logistic regression model trained on well-engineered features provided top-of-the-line F1 scores compared to more sophisticated machine learning models. More importantly, it also remained simple enough to train daily on fresh data and easily explainable to business stakeholders.

- Time‑aware validation is non‑negotiable: Using an expanding time window for training/testing datasets was useful in preventing information leakage, as well as seeing how the model would perform on future traffic patterns.

- Spark pipelines make experimentation production‑friendly: Using Spark ML pipelines for all of the preprocessing and modeling steps allowed the team to use the exact same code base for research and, eventually, production, reducing the risk of mismatches between training and serving.

Taken as a whole, this approach enabled Abhinav and his team to train and evaluate multiple large-scale models on approximately 30 million flight records. It also demonstrated how airlines can reliably forecast which departures will be delayed two hours before their scheduled departure times, turning a network-wide operational pain point into a more manageable, data-driven problem.

Follow along with the full analysis and findings here: https://github.com/abhisha1991/w261_final_project/tree/main

About the Researcher

Abhinav is currently a Staff ML Engineer at Docusign, where he focuses on advancing document-intelligence capabilities to drive adoption of the Intelligent Agreement Management (IAM) platform. Since its launch in June 2024, the platform has processed hundreds of millions of agreements, with Abhinav playing a key role in shaping the AI systems that enable this scale. Prior to Docusign, Abhinav worked at Microsoft’s headquarters in Redmond, WA, contributing to core cloud and infrastructure initiatives across Azure Networking and Azure AI Search. His work supported both the foundational backbone of Microsoft Azure and the growth of Azure AI Search during the pre-LLM era.

Abhinav holds master’s degrees from the University of California, Berkeley and Columbia University, where he specialized in data science, information retrieval, machine learning, distributed systems, and operational excellence. He also earned a bachelor’s degree in Computer Science from Manipal University, building a strong foundation in core computer science principles and programming. Outside of work, Abhinav enjoys playing the guitar, traveling, exploring new places, and learning about aviation systems.
