Google Celebrates Global Accessibility Awareness Day with Major Android and Chrome Updates

In celebration of Global Accessibility Awareness Day, Google announced a host of new accessibility features for Android and Chrome, designed to make technology more inclusive and user-friendly. The updates, which are powered by AI technologies like Gemini, are aimed at improving experiences for users with disabilities, especially those with vision, hearing, and speech impairments.

From more intuitive screen readers to real-time captioning that captures emotion, Google's new tools are set to redefine digital accessibility. Here's everything you need to know.

AI-Powered Enhancements for Android

Google has been integrating its Gemini AI into core Android features to improve accessibility for users with vision and hearing challenges. According to Angana Ghosh, Director of Product Management for Android, these updates are designed to make everyday interactions smoother and more intuitive.

TalkBack Gets Smarter with Gemini AI

One of the most significant updates is the expansion of Gemini AI within TalkBack, Android's built-in screen reader. Gemini was originally introduced to TalkBack last year to provide image descriptions; now users can also ask questions about the content on their screen.

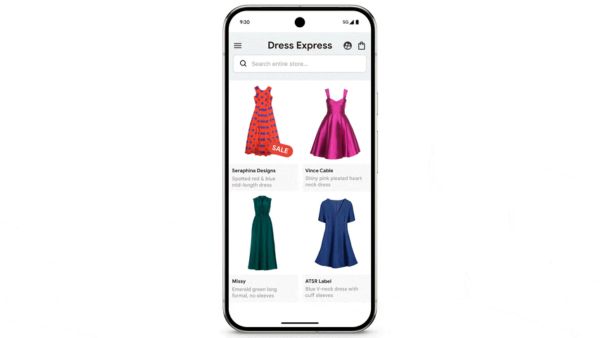

Imagine receiving a photo of a guitar from a friend. With the new TalkBack, you can ask what type of guitar it is, what color it is, or whether there are any visible details that might not be obvious at first glance. The same goes for shopping apps: you can now ask about the material of an item or check for ongoing discounts without needing to navigate complex menus.

This move makes TalkBack more interactive and conversational, giving users deeper insight into visual elements without relying solely on pre-existing alt text.

Expressive Captions: Real-Time Emotion and Sound Recognition

Another game-changing addition to Android is Expressive Captions. Unlike traditional captioning, this feature goes beyond simple transcription. It captures the tone and emotion behind spoken words, such as stretched sounds and enthusiasm, so users get more context.

For example, if someone shouts "Amaaaaazing!" during a sports highlight, Expressive Captions will reflect that stretched sound. It even recognizes subtle sounds like whistling or throat clearing, providing richer context for those who rely on captions.

The feature is currently rolling out in English to users in the United States, United Kingdom, Canada, and Australia on devices running Android 15 or newer.

Expanding Speech Recognition with Project Euphonia

Google's commitment to accessible technology isn't limited to vision and hearing; it also extends to speech recognition. Launched in 2019, Project Euphonia aimed to make speech recognition more accessible for people with atypical speech patterns.

Developer Tools for Customized Speech Models

To broaden its reach, Google is now offering open-source resources through Project Euphonia's GitHub page. These tools enable developers to build personalized audio applications and custom speech models that can better understand diverse speech patterns.

This is a significant step toward making voice commands and virtual assistants more inclusive, especially for people with speech disabilities or non-standard accents.

African Language Support Through CDLI

Earlier this year, Google partnered with University College London to launch the Centre for Digital Language Inclusion (CDLI). This initiative focuses on developing open datasets for 10 African languages and building speech recognition technologies for non-English speakers.

The goal is to support local developers and communities, creating speech tools that better understand the nuances of African languages. This expansion aligns with Google's broader vision of making its technologies more globally accessible.

New Accessibility Features for Students

Google is also extending its accessibility efforts to education. Students using Chromebooks now have more options to navigate and interact with their devices through features like Face Control, which lets users operate their Chromebooks with facial gestures, and Reading Mode, which offers customizable text display.

Additionally, College Board's Bluebook testing app, which is used for SAT and AP exams, now supports Google's ChromeVox screen reader and Dictation, offering students with disabilities greater accessibility during high-stakes tests.

Chrome Gets a Boost in Accessibility

Google's Chrome browser, used by more than two billion people daily, is also seeing improvements in accessibility.

Enhanced PDF Interaction with OCR

One of the standout features is Optical Character Recognition (OCR) for scanned PDF files. Previously, screen readers couldn't interpret text in scanned PDFs, but with this update, Chrome now automatically recognizes text, allowing users to search, highlight, and read aloud scanned documents just like any other web page.

This is particularly useful for students and professionals who rely on screen readers to navigate academic papers and official documents.

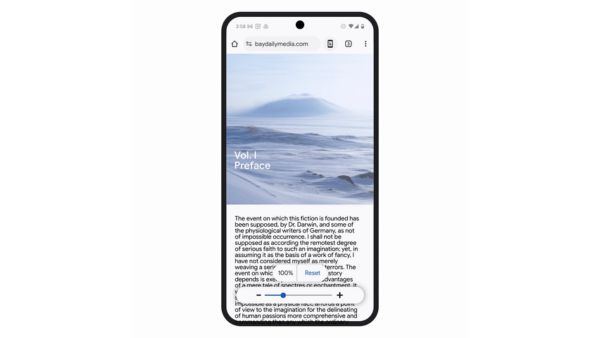

Page Zoom on Android

Another significant upgrade is the addition of Page Zoom in Chrome on Android. Users can now increase text size without affecting the page layout.

The zoom level can be customized for individual websites or applied universally, making browsing more comfortable for those with visual impairments.
