WWDC 2025: From Live Translation to Workout Buddy, Here’s Everything New in Apple Intelligence

At WWDC 2025, Apple introduced major updates to Apple Intelligence, its personal AI system. New features, including Live Translation, expanded visual intelligence, and updated creative tools, are coming to iPhone, iPad, Mac, Apple Watch, and Apple Vision Pro. For the first time, developers can also tap into the on-device model at the core of Apple Intelligence.

Public rollout is expected later this year.

Conversations in Multiple Languages with Live Translation

Apple Intelligence adds Live Translation to Messages, FaceTime, and phone calls, translating text and voice conversations between languages in real time. In Messages, for example, your typed text can be translated automatically before it's sent. On calls, translated captions or spoken translations help users follow along without switching apps.

All translation is handled on-device, so conversations are never sent to cloud servers, in line with Apple's privacy-focused design.

Smarter Screens: Visual Intelligence on iPhone and iPad

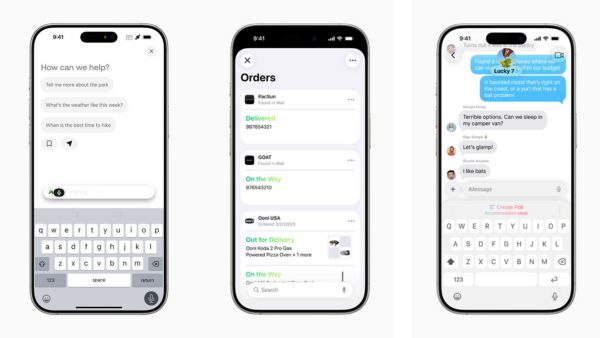

Visual Intelligence extends Apple Intelligence to whatever users are viewing on-screen. While reading a product review or browsing an event invite, users can trigger actions such as searching for similar items on Google or Etsy, or adding the event to their calendar with the details pre-filled.

The feature is activated with the same buttons used to take a screenshot. It also works with Apple's ChatGPT integration, so users can ask questions about on-screen content without copying and pasting it.

Custom Emoji and AI Images Get More Flexible

Apple is also updating Genmoji and Image Playground. Users can now combine multiple emoji, or describe a specific look, to generate a new one. Genmoji can reflect facial features, expressions, or hairstyles, making it easier to create likenesses of friends or family.

Image Playground adds support for new visual styles, such as oil painting and vector art. Users describe what they want and Image Playground creates it; data is sent to ChatGPT only with the user's permission.

Apple Watch Gains a Personalized Fitness Coach

Workout Buddy is a new feature coming to Apple Watch. It uses Apple Intelligence to provide real-time, personalized feedback during workouts, drawing on your fitness history and live metrics such as heart rate and Activity ring progress. It then delivers spoken feedback in a voice modeled after Fitness+ trainers.

Workout Buddy supports common workout types such as outdoor running, walking, cycling, HIIT, and strength training. It requires Bluetooth headphones and a nearby iPhone that supports Apple Intelligence.

Shortcuts Now Integrate Directly with Apple Intelligence

The Shortcuts app is being upgraded to work directly with Apple Intelligence. Users can build automated workflows that generate summaries, compare notes, or create images. For instance, a student could build a shortcut that compares a lecture transcription with their own notes and highlights any points they missed.

Users can choose whether a shortcut runs entirely on-device or taps into ChatGPT for more complex requests.

Developers Can Now Use Apple's On-Device AI Model

One of the more notable updates is the introduction of the Foundation Models framework. This gives developers access to the same on-device AI model powering Apple Intelligence, letting them build intelligent features that work offline and don't require cloud APIs.

The framework has native Swift support and is designed to be easy to adopt. Developers can implement features such as summarizing content, answering questions, or generating creative text with just a few lines of code. Because the model runs on-device, apps avoid external server calls and additional infrastructure.
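As a rough illustration of the "few lines of code" claim, here is a minimal Swift sketch that summarizes text with the on-device model. It assumes the `FoundationModels` API as Apple presented it at WWDC 2025 (`SystemLanguageModel.default.availability`, `LanguageModelSession(instructions:)`, and `respond(to:)`), which needs an Apple Intelligence-capable device running iOS 26 or macOS 26. The helper names `makeSummaryPrompt` and `summarize`, and the prompt wording, are hypothetical and not part of Apple's API.

```swift
// Sketch only: assumes the FoundationModels API shown at WWDC 2025.
#if canImport(FoundationModels)
import FoundationModels
#endif

// Hypothetical helper: builds the prompt string sent to the model.
func makeSummaryPrompt(for text: String) -> String {
    "Summarize the following text in two sentences:\n\n\(text)"
}

#if canImport(FoundationModels)
// Hypothetical helper: returns nil when the on-device model can't be used
// (for example, an unsupported device or Apple Intelligence turned off).
@available(iOS 26.0, macOS 26.0, *)
func summarize(_ text: String) async throws -> String? {
    guard case .available = SystemLanguageModel.default.availability else {
        return nil
    }
    // A session holds the conversation; instructions steer the model's behavior.
    let session = LanguageModelSession(instructions: "You are a concise assistant.")
    // The request is handled by the on-device model rather than a remote server.
    let response = try await session.respond(to: makeSummaryPrompt(for: text))
    return response.content
}
#endif
```

Calling `try await summarize(articleText)` from an async context would return the model's summary, or nil if the model is unavailable. Checking availability first lets an app hide the feature on unsupported devices instead of failing at request time.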

Other Features Across the Apple Ecosystem

Several smaller features are also being rolled out:

- Apple Wallet can summarize order tracking information from merchant emails

- Messages can suggest a poll in group chats when relevant

- New personalization options allow users to customize chat backgrounds with AI-generated images

- Priority Messages in Mail highlight urgent emails, and Notification Summaries condense long threads

- Photos and Notes now support natural language search and summarization of transcription data

- Siri is more conversational, can retain context across requests, and accepts typed requests for silent interactions

Focus on Privacy with On-Device and Private Cloud Compute

Apple continues to frame its AI approach around privacy. Most AI tasks will be processed on-device. For more complex tasks, Apple will use a Private Cloud Compute system that keeps user data isolated and temporary.

According to Apple, the code running on these servers is publicly inspectable, allowing third parties to verify that data isn't stored or misused.

Supported Devices and Availability

The new Apple Intelligence features are available for testing now through the Apple Developer Program, and a public beta will follow next month. They will be officially available this fall on the following devices:

- iPhone 16 models

- iPhone 15 Pro and 15 Pro Max

- iPads and Macs with M1 or newer chips

- iPad mini (A17 Pro)

To use Apple Intelligence, both the device language and the Siri language must be set to a supported language. Initially, this includes English, French, German, Japanese, and a few others, with more languages such as Turkish, Vietnamese, and Dutch to be added later this year.

Some features may not be available in all countries due to local regulations.
