Google DeepMind Unleashes Gemini Robotics: AI-Powered Robots That Think, Adapt, and Act!

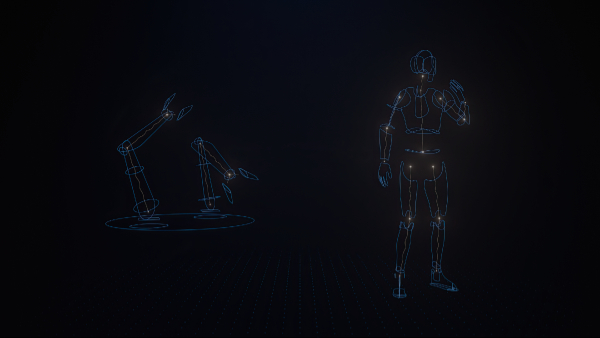

Google DeepMind has unveiled two new AI models, Gemini Robotics and Gemini Robotics-ER, both built on Gemini 2.0. Gemini, Google's AI model, was first introduced in 2023 and has evolved significantly since. The new models are intended to let robots carry out physical tasks much as a person would. Google emphasises the importance of "embodied" reasoning for AI to be truly beneficial in the physical world.

Gemini Robotics is a vision-language-action (VLA) model built on Gemini 2.0. It adds physical actions as a new output modality so that it can control robots directly. According to Google, the model excels in three key areas: generality, interactivity, and dexterity. It adapts to new situations and interacts effectively with both people and its environment.

Gemini Robotics: Enhancing Robot Interaction

The model can perform delicate tasks like folding paper or unscrewing bottle caps. It understands a wide range of natural language instructions and adjusts its actions accordingly. It also monitors its surroundings continuously, detecting changes and adapting as needed, which makes it easier for people to collaborate with robot assistants in a variety of settings.

Google highlights that robots vary in shape and size, so Gemini Robotics is designed for adaptability. The model was primarily trained on the bi-arm robotic platform ALOHA 2 but can also control platforms based on Franka arms used in academic labs.

Gemini Robotics-ER: Advancing Spatial Reasoning

Alongside Gemini Robotics, Google introduced Gemini Robotics-ER. This model strengthens the spatial reasoning that robotics depends on and is designed to integrate with existing low-level controllers. By combining spatial reasoning with coding capabilities, it significantly improves abilities like pointing and 3D detection.

For instance, when shown a coffee mug, the model can work out how to grasp it with two fingers on the handle and plan a safe approach path. It can perform all the steps needed for robot control, including perception, state estimation, spatial understanding, planning, and code generation.

The introduction of these models marks a significant advancement in AI-driven robotics. By focusing on embodied reasoning and adaptability, Google DeepMind aims to create robots that interact seamlessly with their environment and perform tasks efficiently.
