Facebook is adding AI tools to its platform for suicide prevention

Facebook has been working on suicide prevention tools for years now.

It is pretty common for us to focus only on our physical health while neglecting our mental health altogether. Our society has a long way to go before it realizes the importance of mental well-being.

However, the number of people who suffer from depression and eventually turn to drugs or, worse, commit suicide is alarming. Thankfully, Mark Zuckerberg's Facebook is not turning a blind eye to what's happening. The social network giant is adding AI (artificial intelligence) tools to its platform for suicide prevention. Guy Rosen, VP of Product Management, made the announcement in a blog post.

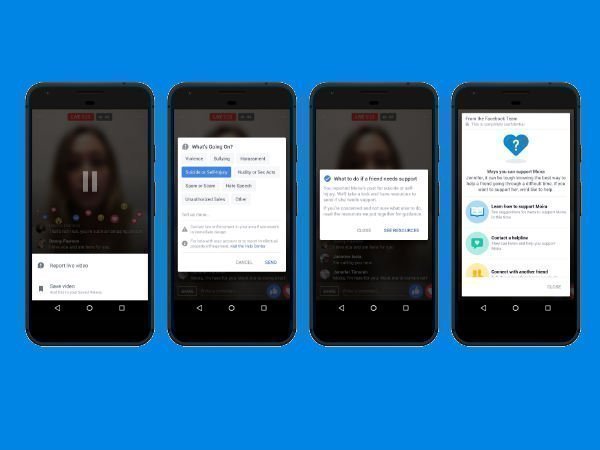

Facebook will use pattern recognition to detect posts or live videos where someone might be expressing thoughts of suicide so that it can respond to reports faster. The company will also improve how it identifies appropriate first responders, and it will dedicate more reviewers from its Community Operations team to reports of suicide or self-harm.

In addition to all this, Facebook is starting to roll out artificial intelligence outside the US to help identify when someone might be expressing thoughts of suicide, including on Facebook Live. This will eventually be available worldwide, except in the EU.

This approach uses pattern recognition technology to help flag posts and live streams that are likely to express thoughts of suicide. The company is continuing to work on this technology to increase accuracy and avoid false positives.

In practice, Facebook will take cues from the text of a post and its comments; for example, comments like "Are you OK?" and "Can I help?" can be strong indicators. Facebook says that in some instances, the technology has even identified videos that may have gone unreported.

The blog post also mentions that Facebook's Community Operations team consists of thousands of people around the world who review reports about content on Facebook. The team includes a dedicated group of specialists who have specific training in suicide and self-harm.

What's more, Facebook is using automation so the team can access the appropriate first responders' contact information more quickly.
